“Flash” Storage Will Be Cheap – The End of the World is Nigh

A couple of weeks ago I tweeted a projection that the $/GB for flash drives will meet the $/GB for hard drives within 3-4 years. It was more of a feeling based upon current pricing with Moore’s Law applied than a well-researched statement, but it felt about right. I’ve since been thinking some more about this within the context of current storage industry offerings from the likes of EMC, Netapp and Oracle, wondering what this might mean.

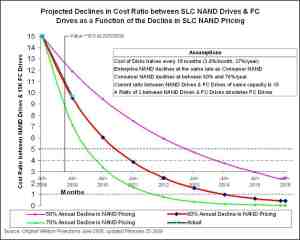

First of all I did a bit of research – if my 3-4 years guess-timate was out by an order of magnitude then there is not much point in writing this article (yet). I wanted to find out what the actual trends in flash memory pricing look like and how these might project over time, and I came across the following article: Enterprise Flash Drive Cost and Technology Projections. Though this article is now over a year old, it shows the following chart which illustrates the effect of the observed 60% year on year decline in flash memory pricing:

This 60% annual drop in costs is actually an accelerated version of Moore’s Law, and does not take into account any radical technology advances that may happen within the period. This drop in costs is probably driven in the most part by the consumer thirst for flash technology in iPods and so forth, but naturally ripples back up into the enterprise space in the same way that Intel and AMD’s processor technologies do.
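To make the arithmetic behind that kind of projection concrete, here is a minimal sketch of the compound-decline calculation. Only the 60% annual decline comes from the article cited above; the starting price ratios and the hard drive decline rate are purely illustrative assumptions, and the function name `years_to_parity` is made up for this example.

```python
import math


def years_to_parity(flash_per_gb, disk_per_gb, flash_decline=0.60, disk_decline=0.0):
    """Years until flash $/GB falls to disk $/GB under constant annual declines.

    flash_decline=0.60 is the observed rate quoted in the post; every other
    input is a hypothetical value supplied by the caller.
    """
    if not (0.0 <= flash_decline < 1.0 and 0.0 <= disk_decline < 1.0):
        raise ValueError("decline rates must be in the range [0, 1)")
    if flash_per_gb <= disk_per_gb:
        return 0.0  # already at or below parity
    if flash_decline <= disk_decline:
        raise ValueError("flash must decline faster than disk to reach parity")
    # Each year the flash/disk price ratio is multiplied by this factor (< 1).
    yearly_ratio = (1.0 - flash_decline) / (1.0 - disk_decline)
    return math.log(disk_per_gb / flash_per_gb) / math.log(yearly_ratio)


if __name__ == "__main__":
    # Hypothetical starting gaps: flash costing 10x, 20x and 40x disk per GB.
    for gap in (10.0, 20.0, 40.0):
        flat = years_to_parity(gap, 1.0)                     # disk price held flat
        moving = years_to_parity(gap, 1.0, disk_decline=0.30)  # assumed disk decline
        print(f"{gap:>4.0f}x gap: {flat:.1f} years (flat disk), {moving:.1f} years (disk -30%/yr)")
```

With a hypothetical 20x starting gap and flat hard drive pricing, this works out to roughly 3.3 years. Any assumed decline in hard drive prices pushes the crossover later, so the result is quite sensitive to inputs that the chart alone does not pin down.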

So let’s just assume that my guess-timate and the above chart are correct (they almost precisely agree) – what does that mean for storage products moving forward?

Looking at recent applications of flash technology, we see that EMC were the first off the blocks by offering the option of relatively large flash drives as drop-in replacements for their hard drives in their storage arrays. Netapp took a different approach of putting the flash memory in front of the drives as another caching layer in the stack. Oracle have various options in their (formerly Sun) product line and a formalised mechanism for using flash technology built into the 11g database software and into the Exadata v2 storage platform. Various vendors offer internal flash drives that look like hard drives to the operating system (whether connected by traditional storage interconnects such as SATA or by PCI Express). If we assume that the cost of flash technology becomes equivalent to hard drive storage in the next three years, I believe all these technologies will quickly become the wrong way to deploy flash technology, and only one (Oracle) has an architecture which lends itself to the most appropriate future model (IMHO).

Let’s flip back to reality and look at how storage is used and where flash technology disrupts that when it is cheap enough to do so.

First, location of data: local or networked in some way? I don’t believe that flash technology disrupts this decision at all. Data will still need to be local in certain cases and networked via some high-speed technology in others, in much the same way as it is today. I believe that the networking technology will need to change for flash technology, but more on that later.

Next, the memory hierarchy: Where does current storage sit in the memory hierarchy? Well, of course, it is at the bottom of the pile, just above tape and other backup technologies if you include those. This is the crucial area where flash technology disrupts all current thinking – the final resting place for data is now close or equal to DRAM memory speeds. One disruptive implication of this is that storage interconnects (such as Fibre Channel, Ethernet, SAS and SATA) are now a latency and bandwidth bottleneck. The other, potentially huge, disruption is what happens to the software architecture when this bottleneck is removed.

Next, capacity: How does the flash capacity sit with hard drive capacity? Well that’s kind of the point of this posting… it’s currently quite a way behind, but my prediction is that they will be equal by 2013/2014. Importantly though, they will then start to accelerate away from hard drives. Given the exponential growth of data volumes, perhaps only semiconductor based storage can keep up with the demand?

Next, IOPS: This is the hugely disruptive part of flash technology, and is a direct result of a dramatically lowered latency (access time) when compared to hard disk technology. Not only is the latency lowered, but semiconductor-based storage is more or less contention-free given the absence of serialised moving parts such as a disk head. Think about it – the service time for a given hard drive I/O is directly affected by the preceding I/O and where the head was left on the platter. With solid-state storage this does not occur and service times are more uniform (though writes are consistently slower than reads).
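The dependence on head position can be illustrated with a toy model. This is only a sketch: the seek, rotation and flash read figures below are hypothetical round numbers rather than measurements, and real drives have queueing, caching and controller behaviour that this ignores.

```python
import random
import statistics


def hdd_service_times(requests, full_seek_ms=8.0, rotation_ms=4.0, seed=0):
    """Toy disk model: seek cost scales with head travel from the previous I/O.

    `requests` are platter positions normalised to the range 0.0-1.0.
    full_seek_ms and rotation_ms are assumed, illustrative values.
    """
    rng = random.Random(seed)
    head = 0.0
    times = []
    for target in requests:
        seek = full_seek_ms * abs(target - head)    # depends on where the head was left
        rotational = rng.uniform(0.0, rotation_ms)  # wait for the sector to come round
        times.append(seek + rotational)
        head = target                               # this I/O affects the next one
    return times


def flash_service_times(requests, read_ms=0.1):
    """Toy flash model: every read costs the same, wherever the data lives."""
    return [read_ms for _ in requests]


if __name__ == "__main__":
    rng = random.Random(1)
    scattered = [rng.random() for _ in range(1000)]
    for label, reqs in (("scattered", scattered), ("sorted", sorted(scattered))):
        hdd = hdd_service_times(reqs)
        print(f"disk, {label:9s}: mean {statistics.mean(hdd):.2f} ms, "
              f"stdev {statistics.pstdev(hdd):.2f} ms")
    flash = flash_service_times(scattered)
    print(f"flash reads    : mean {statistics.mean(flash):.2f} ms, "
          f"stdev {statistics.pstdev(flash):.2f} ms")
```

In this model the same set of disk requests gets cheaper or more expensive purely according to the order in which they arrive, while the flash figure does not move at all.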

These disruptions mean that the current architectures of storage systems are not making the most of semiconductor-based storage. Hey, why do I keep calling it “semiconductor-based storage” instead of SSD or flash? The reason is that the technologies used in this area are changing frequently: from DRAM-based systems to NOR-based flash to NAND-based flash to DRAM-fronted flash; single-level cells to multi-level cells; battery-backed to “Super Cap” backed. Flash, as we know it today, could be outdated as a technology in the near future, but “semiconductor-based” storage is the future regardless.

I think that we now need technologies that look more like Oracle Exadata v2, with low-latency RDMA interfaces directly into the Operating System/Database. However, they need to easily and natively support other types of storage (unstructured data such as files, VMware datastores and so forth). The Exadata architecture lends itself well to changes in this area in both hardware trends and access protocols.

Perhaps more importantly, we are also only just beginning to understand the implications in software architecture for the disrupted memory hierarchy. We simply cannot continue to treat semiconductor-based storage as “fast disk” and need to start thinking, literally, outside the box.


May 29, 2010 at 9:18 pm

What are the implications for index data structures? Since B-Tree indexes implicitly assume “slow” storage, do you foresee major changes inside the RDBMS kernel and/or the type of indexes that will be used 5 years from now?

BTW, I’m relatively new to Oracle and I have found your “old” book a great resource – many thanks!

May 29, 2010 at 9:42 pm

Hey Brett,

Glad you still like the ‘old’ book 🙂

In response to your question, indexes are still a more efficient way to get to an optimised subset of data, regardless of whether a full table scan is now faster or not. Everything is relative after all – if you can FTS in 0.5s but use an index path in 0.1s, it’s still better 🙂 The implementation of the index may need to be re-evaluated, and the cache architecture is probably wrong, but the concept of using an index is almost certainly correct in my opinion.

Cheers

James

May 30, 2010 at 11:03 pm

But the trade-off in indexes is a slower write speed (including write contention on ‘date_created’ type indexes). Plus you often follow up an index access with a table access. There will be edge cases where the benefit, for writes, of dropping indexes will outweigh some query impacts.

May 30, 2010 at 6:03 pm

Doesn’t this mean in the end that, once the last mechanical part is gone, the need for centralized data storage can be reduced enormously?

May 30, 2010 at 9:55 pm

Thought-provoking post. Thanks for that.

I can see OS caches becoming redundant, for example. With all that means in terms of OS and app design. Assuming of course the problem of transmission delays is resolved.

On the negative side: I’m not sure the SSD problems after a large number of writes have yet been satisfactorily solved. They likely will, but for the time being SSDs are reserved for special applications where ability to re-write large quantities of data is needed.

Still: very interesting years ahead!

May 30, 2010 at 11:30 pm

and of course I meant: “…large quantities of data is NOT needed”…

Jeeesh…

June 2, 2010 at 2:54 pm

Interesting stuff James!

I suppose “semiconductor-based storage” will tip the storage/processor balance again and give some of the latest incredibly capable chips something to keep them busy! (i.e. with the benefit of massively improved throughput).

I’m not sure about Michael’s comment – now that people have seen the benefits of centralized storage (especially for provisioning) I don’t think there’s any way back… the genie is out of the bottle. Diskless/stateless compute nodes plus central persistent storage is the way to go IMO 🙂

June 2, 2010 at 4:18 pm

Simon,

I agree in the most part about centralised data. The case breaks down for certain things like NoSQL and map/reduce architectures, though!

Cheers

James

July 12, 2010 at 11:54 am

[…] out what I am writing, I’ve just spotted someone did a better job of this before me. Over to James Morle who did a fantastic post on this very topic back in May. Stupid me for not checking out his blog more often. Jame also […]

August 23, 2010 at 1:12 pm

[…] that will form part of the paper, and I will write a few more over the next few weeks. Check out this one, and keep an eye on my blog for the next few weeks. The first one out the bag is “Serial to […]